When was NSF established?

The U.S. National Science Foundation was established as a federal agency in 1950 when President Harry S. Truman signed Public Law 81-507, the "National Science Foundation Act of 1950."

Since then, NSF has supported basic research — research driven by curiosity and discovery — at colleges, universities and other organizations across the country for over seven decades.

While NSF has grown and evolved since 1950, its mission has remained the same: "To promote the progress of science; to advance the national health, prosperity, and welfare; and to secure the national defense; and for other purposes."

Why was NSF formed?

The seeds for NSF were planted years before it was established, when President Franklin D. Roosevelt recognized the crucial role that the scientific enterprise was playing in the Allied success in World War II.

Before World War II, the federal government played a minor role in supporting research at U.S. colleges and universities. Instead, research institutions relied on philanthropic endowments or funding from private companies, often with vested interests. "Curiosity-driven" science, a cornerstone of discovery and innovation, was stymied in the process.

In November 1944, thinking ahead to the end of the war, Roosevelt wrote to Vannevar Bush, director of the Office of Scientific Research and Development, asking how the successful application of scientific knowledge to wartime problems could be carried over into peacetime and requesting recommendations on a national policy for science.

In 1945, Bush presented his report, "Science: The Endless Frontier," to Truman. The report envisioned a new agency whose mission would promote the progress of science by supporting basic research at colleges and universities.

In 1950, following a series of bill revisions, Congress passed and Truman signed Public Law 81-507, establishing NSF and the National Science Board. The agency's place in history was cemented.

How has NSF changed?

NSF has grown and adapted to meet societal needs in the decades since it was formed. To advance the performance of research, new directorates and programs have been created and new awards developed.

Some notable changes across the agency over the last 70 years include an expanded portfolio charged with broadening participation in science, technology, engineering and mathematics. In 1952, for example, NSF funded fellowships for graduate students. Today, the NSF Directorate for STEM Education has grown to support students and teachers at all grade levels, strengthening STEM learning through initiatives that promote access.

New directorates have also been established at NSF: Engineering in 1981; Computer and Information Science and Engineering in 1985/86; and Social, Behavioral and Economic Sciences in 1991. And in 2022, the NSF Directorate for Technology, Innovation and Partnerships — its first new directorate in 30 years — was formed to bridge curiosity-driven and use-inspired research and grow industry, create new jobs, and cultivate a highly skilled STEM workforce across the nation.

In the years to come, NSF will continue to grow and evolve to meet the challenges of the day. Yet NSF's mission "to promote the progress of science" remains steadfast.

NSF's history and impacts: A brief timeline

1944 – November 17

President Franklin D. Roosevelt writes Vannevar Bush, director of the Office of Scientific Research and Development, asking how the successful application of scientific knowledge to wartime problems could be carried over into peacetime.

1945 – July 5

Vannevar Bush presents a detailed report to President Harry S. Truman. Among the recommendations in "Science: The Endless Frontier" is the establishment of a foundation to oversee federal funding for basic scientific research and the training of workers in science.

1945 – July 19

Senator Warren Magnuson introduces a bill to implement Bush's plan, the first of several offered. In later bills, the proposed name is the "National Science Foundation," a title first suggested by Senator Harley M. Kilgore in 1945.

1950 – May 10

Congress passes and President Harry S. Truman signs Public Law 81-507, creating NSF. The act provides for a National Science Board of 24 part-time members and a director as chief executive officer. The board's first meeting is held Dec. 12.

1951 – March

Alan T. Waterman, chief scientist at the Office of Naval Research, is nominated by President Harry S. Truman to become the first director of NSF. The agency is provided with an initial appropriation of $225,000.

1952 – February 1

The National Science Board approves the first 28 research grants to be awarded by NSF. The first grant, for $10,300, goes to the Institute for Cancer Research. A total of 97 research grants are awarded in the first year. Among the recipients is Max Delbruck (Nobel Prize in physiology or medicine, 1969).

1952

NSF launches the Graduate Research Fellowship Program to support outstanding graduate students in NSF-supported STEM disciplines. It will become NSF's longest continuously operating program.

1955

The first planning grants are given for the establishment of national radio and optical astronomical observatories.

1956

NSF awards its first grants to the National Radio Astronomy Observatory and to what would become the Kitt Peak National Observatory. Construction begins on NRAO in Green Bank, West Virginia. The observatory is completed in 1962.

1957 – January 22

The South Pole station is officially dedicated. The U.S. has six scientific stations established in Antarctica, all funded by NSF. The 1957–58 International Geophysical Year was a global effort to collect data in many fields.

1957 – October 4

The Soviet Union launches Sputnik I, the first artificial satellite, into orbit. This triggers a national self-appraisal of scientific research and education, and the U.S. Congress responds by more than doubling the NSF appropriation to $134 million, beginning July 1, 1958.

1959

NSF develops the National Center for Atmospheric Research. It will begin operating in 1960 in Boulder, Colorado, as an NSF program managed by the nonprofit University Corporation for Atmospheric Research.

1959 – August 25

President Dwight D. Eisenhower signs Public Law 86-209, establishing the National Medal of Science, awarded to individuals for their outstanding contributions to areas of science.

1960s

During the 1960s, NSF funds, in part, research that leads to the creation of data compression algorithms. The technology is first used for satellite transmissions but today is used in everyday items such as TVs and computer hard drives.

1963 – July 1

Leland J. Haworth becomes the second director of NSF (1963–1969).

1965

In the 1960s, an English professor and linguist at Gallaudet College, William Stokoe, begins to look at American Sign Language and discovers that it is full of regularities and structure, like a spoken language. With an NSF grant, he publishes the dictionary of ASL.

1968 – July 18

President Lyndon B. Johnson signs Public Law 90-407, amending the NSF authorization act, making explicit support for the social sciences and applied research, and charging NSF with fostering development of computer and other scientific technologies.

1969 – July 14

William David McElroy becomes the third director of NSF (1969–1972).

1969 – October 1

The Pentagon transfers ownership of Arecibo Observatory in Puerto Rico to NSF. This is one of the largest centers for research in radio astronomy, planetary radar and terrestrial aeronomy. The huge "dish" is 1,000 feet in diameter, 167 feet deep and covers an area of about 20 acres.

1970s

Research by NSF-funded scientists on solid modeling leads to widespread use of computer-aided design and computer-aided manufacturing, or CAD/CAM, which revolutionizes many manufacturing processes.

1970s

NSF helps fund barcode research, which, in turn, helps to perfect the accuracy of the scanners that read barcodes.

1970 – September 1

The Office for the International Decade of Ocean Exploration is established. This is one of several large-scale projects in oceanography that NSF has led or participated in.

1971

NSF's Division of Materials Research is established in 1971 to take over the Department of Defense's materials science portfolio, following the 1969 Mansfield Amendment restricting DOD to mission-related research. NSF becomes the leading funder of materials research at U.S. universities.

1971 – February 1

Research Applied to National Needs, or RANN, is established. The sometimes-controversial effort seeks to solve contemporary domestic problems like pollution, transportation and the energy crisis through the application of academic research. It is disbanded in 1978.

1971 – September 10

NSF Director William McElroy announces the foundation's commitment to improving the quality of science education and strengthening the research capability at Historically Black Colleges and Universities, or HBCUs.

1972

Launch of the "Science and Engineering Indicators," a biennial report to Congress and the president, composed by the National Center for Science and Engineering Statistics.

1972 – February 1

H. Guyford Stever assumes the directorship of NSF (1972–1976).

1973

NSF takes over support of the deep-sea research submersible Alvin. Built for the Navy in 1964 and still working today, Alvin will discover life in the extreme environment of deep-sea vents in 1977 and complete its 5,000th dive in 2018.

1975 – August 9

The Alan T. Waterman Award is established by Congress. The award recognizes an outstanding young researcher in any of the fields supported by NSF.

1977 – May 3

Richard C. Atkinson is confirmed by the Senate to be NSF director (1977–1980).

1978 – January 19

To improve the geographic distribution of grants and help underrepresented states improve research competitiveness, the National Science Board approves the Experimental Program to Stimulate Competitive Research, or EPSCoR. The first grants are awarded in May 1979.

1980s

There are some five foundational patents from which additive manufacturing, also known as 3D printing, arose. NSF was critical to at least three of these. The first filed was for selective laser sintering in 1986. Others were sheet lamination and binder jetting.

1980s

Buckyballs are clusters of 60 carbon atoms, forming a polyhedral structure, like a soccer ball. Such carbon structures are fundamentally novel materials. In 1996, U.S. researchers Robert F. Curl Jr. and Richard E. Smalley, funded by NSF, shared two-thirds of the Nobel Prize in chemistry.

1980s

Challenged to improve research and education in engineering and to shorten the time between discovery and application, in 1985, NSF launches the Engineering Research Centers. In 1987, the Science and Technology Centers expand the concept to other disciplines.

1980s

Research funded by NSF at the National Center for Atmospheric Research and at universities is instrumental in the development of Doppler radar as a meteorological research tool, including mobile Doppler on Wheels. Doppler radar is commonly displayed in weather prediction and reporting.

1980 – September 23

John B. Slaughter is confirmed by the Senate as director of NSF (1980–1982).

1981 – March 8

In a major reorganization, NSF establishes the Directorate for Engineering, giving new emphasis to engineering research.

1982 – June 21

NSF-funded researchers announce the discovery of the oldest known hominids. The approximately 4-million-year-old specimens are of Australopithecus afarensis, whose best-known member is Lucy.

1982 – November 3

Edward A. Knapp becomes director of NSF (1982–1984).

1982 – 1989

In 1982, NSF's education funding is cut, but global competition leads President Reagan to increase K-12 funding and create the NSF-administered Presidential Awards for Excellence in Mathematics and Science Teaching. By 1990, the Education and Human Resources directorate is established.

1984 – August 6

Erich Bloch is confirmed by the Senate as director of NSF (1984–1990).

1985 – February 25

NSF announces funds for National Advanced Scientific Computing Centers. The centers enable researchers to model everything from molecules to the structure of the early universe. NSF links the centers and its regional university network to form NSFNET, forerunner of the internet.

1985 – 1986

NSF establishes supercomputing centers at Cornell, the University of Illinois, Carnegie Mellon University/University of Pittsburgh, University of California San Diego and Princeton University. Since then, NSF has supported many other supercomputers like Stampede in Texas and Blue Waters in Illinois.

1986

In 1965, an NSF-funded investigator discovered a bacterium in the extreme temperatures of the hot springs in Yellowstone Park. By 1986, this leads to a DNA polymerase that can amplify tiny amounts of DNA, a powerful tool for courts and biologists alike.

1987 – November 24

NSF announces the awarding of the NSFNET Cooperative Agreement to Merit, IBM and MCI. With additional support from the state of Michigan, the agreement will result in the building of a new, high-speed NSFNET backbone, the foundation for the internet.

1990s

For decades, NSF has supported informal STEM education, including such fun and innovative television shows as "The Magic School Bus," to which NSF committed support in 1991.

1990s

Computer visualization techniques, such as computer graphics, animation and virtual reality, are pioneered with NSF support. Weather patterns, medical conditions and mathematical relationships are only some of the uses to which virtual reality can be applied.

1990s

Since the development of functional magnetic resonance imaging, a kind of MRI, in the early 1990s, NSF has supported numerous fMRI studies that have resulted in a deeper understanding of human cognition and brain function across a spectrum of areas.

1990s

Qualcomm revolutionizes cellphone technology with CDMA wireless technology and becomes a Fortune 500 company. In the 1980s, Qualcomm received two grants from NSF's Small Business Innovation Research program.

1991

NSF-funded researchers at Penn State University discover the first of three extrasolar planets using radio telescopes. Two of these planets are similar in mass to the Earth. The third has roughly the mass of the moon.

1991 – March 4

Walter E. Massey becomes director of NSF (1991–1993).

1991 – October 24

NSF's new Directorate for Social, Behavioral and Economic Sciences is announced.

1992

Two sites for the Laser Interferometer Gravitational-Wave Observatory are announced, one at Livingston, Louisiana, and one at Hanford, Washington.

1992

The Big Bang theory is confirmed. For this 1992 discovery of the basic form and small variations in the cosmic microwave background radiation, NSF-funded researcher George Smoot of the University of California, Berkeley will share the 2006 Nobel Prize in physics with John Mather of NASA.

1993

NSF-funded Andrew Wiles presents the first and only novel proof of mathematician Pierre de Fermat's deceptively simple proposition he called his "last theorem." This contributes to secure communications and cryptography.

1993

NSF-funded scientists detect volcanic eruptions on the Juan de Fuca Ridge. By using U.S. Navy hydrophone arrays, oceanographers can monitor in real time volcanic eruptions on the ridge. NSF supports these and other technologies and their applications to volcanoes.

1993 – October 7

Neal F. Lane is confirmed as director of NSF (1993–1998).

1994

NSF joins the Department of Defense and the National Aeronautics and Space Administration in funding new technologies for digital libraries, making more information available over the internet.

1994 – August 24

NSF announces the Advanced Technological Education program, an effort to improve the education of technicians in technologically advanced fields such as gas metal arc welding.

1998

Two teams of NSF-supported astronomers discover that not enough matter exists to slow the universe's expansion. Instead, a mysterious force — dark energy, which accounts for almost three-fourths of the universe's mass-energy — expands it at an increasing rate (2011 Nobel Prize in physics).

1998 – May 22

Rita R. Colwell is confirmed as director of NSF (1998–2004).

1998 – September 28

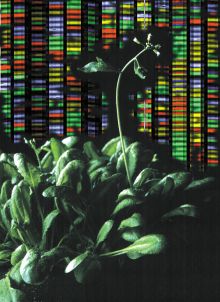

NSF announces major support for plant genome research, including important crops like corn, soybeans, tomatoes and cotton. This follows a multi-agency, multinational, decadelong effort to sequence and locate every gene in the model plant Arabidopsis thaliana.

2000 – October 31

Responding to the White House Initiative on Tribal Colleges and Universities, NSF provides grants through the new Rural Systemic Initiative to improve science, mathematics and technology education in K-12 schools on tribal reservations.

2001

With NSF funding, physicists and ophthalmologists at the University of Michigan develop a procedure with an ultrafast laser for improved LASIK eye surgery. They make significant improvements to interventions in both laser microsurgery and LASIK eye surgery.

2001

With a new push to improve education in science, technology, engineering and mathematics, NSF coins the STEM acronym, replacing the less easily said SMET.

2001 – September 19

NSF establishes six major centers for nanoscale science and engineering research. With nanotechnology as a cross-disciplinary approach to science and engineering of the very small, NSF invests effectively in a multitude of researchers and new collaborations.

2003

NSF establishes a Biomimetic MicroElectronic Systems Engineering Research Center. The center uses fundamental principles in biology to develop novel science and engineering based on nature, with a goal to create new machine-human interfaces for the understanding and treatment of disease.

2004

Breakthroughs lead to the new field of optogenetics, a research technique that enables scientists to use light to precisely control the activity of individual cells within living tissues, effectively turning them "on" or "off." In 2010, this technique was named the "Method of the Year" by the journal Nature Methods.

2004 – November 24

Arden L. Bement Jr. is sworn in as director of NSF (2004–2010).

2005

With funding from the Partnerships for International Research and Education program, U.S. and African scientists study the largest geophysical anomaly inside the Earth: the African Superplume, a huge mass of hot rock rising from the core-mantle boundary.

2007

Advances in remote, real-time, speech-to-text translation (C-PRINT) allow NSF-funded researchers to develop inexpensive smartphone technology to improve communication between hearing professors and deaf or hard-of-hearing students in lab and field classes.

2007

The South Pole Telescope — the largest radio telescope ever built in Antarctica — collects its first observations. The telescope stands 75 feet tall, is 33 feet across and weighs 280 tons.

2008 – October 8

The discovery and development of green fluorescent protein, an amazingly useful tool that allows scientists to track cellular processes, is recognized with the Nobel Prize in chemistry. NSF supported research on this jellyfish protein beginning in the 1960s and supported all three of the Nobel laureates.

2009

An international team of scientists announces its discovery and reconstruction of a fairly complete skeleton of the hominid Ardipithecus ramidus, dating to 4.4 million years ago. Nicknamed Ardi, the female skeleton is 1.2 million years older than the skeleton of Lucy.

2010

NSF-supported researchers use economic matching theory to develop a kidney exchange program that dramatically improves efficiency and doctors' ability to match organs. For his work in this area, Alvin Roth shares the 2012 Nobel Memorial Prize in Economic Sciences.

2010 – September 29

Subra Suresh is confirmed as NSF director (2010–2013).

2011

NSF solicits proposals for its part of the multiagency National Robotics Initiative to accelerate the development and use of assistive robots. NSF has since supported research in robotics in such burgeoning areas as search-and-rescue and reconnaissance.

2011 – July 28

NSF announces the new Innovation Corps (I-Corps) program to move from discoveries to process or product. These public-private partnerships bring together NSF-funded researchers with entrepreneurs and businesses.

2012

A natural immune system found in many bacteria is characterized and repurposed as a powerful new biological tool: CRISPR. NSF-funded researchers develop the new technique that enables the rewriting of specific genetic information.

2012

The Large Hadron Collider at CERN announces the detection of the Higgs boson, a long-predicted subatomic particle. NSF had supported approximately 400 scientists at U.S. universities who had participated in LHC experiments and specifically helped to design, build and operate the particle detectors.

2013

The R/V Sikuliaq, the NSF-funded, next-generation arctic sea vessel, is launched. It supports dozens of researchers with onboard laboratories and specialized deck equipment, enabling multidisciplinary studies in high latitude open seas and near-shore regions.

2013 – April 2

The White House announces an initiative called Brain Research through Advancing Innovative Neurotechnologies. The BRAIN Initiative is an effort by federal agencies and private partners to support and coordinate research to understand how individual brain cells and complex neural circuits interact at the speed of thought.

2014 – March 12

France A. Córdova is confirmed as NSF director (2014–2020).

2015

In 2015, the National Ecological Observatory Network begins operation. A precedent-setting, multidisciplinary infrastructure, NEON generates snapshots of ecosystem health by measuring ecological activity in strategic locations throughout the U.S.

2016

An NSF-funded researcher develops a tool that examines a child's brain waves and can predict reading problems, such as dyslexia, before they appear. This is important because health interventions for children are most effective when they begin early.

2016

As part of NSF's Quantum Leap Big Idea and to secure communication, NSF's Office of Emerging Frontiers and Multidisciplinary Activities awards $12 million to develop systems that use photons in pre-determined quantum states to encrypt data.

2016 – February 11

NSF and the Laser Interferometer Gravitational-Wave Observatory announce the direct observation of gravitational waves resulting from merging black holes approximately 1.3 billion light-years away. LIGO confirms a major prediction of Einstein's general theory of relativity (2017 Nobel Prize in physics).

2016 – May

NSF unveils 10 Big Ideas intended to shape new areas of research, address critical gaps in the nation's research enterprise and tackle grand challenges of a scientific or societal nature.

2016 – September

NSF issues the first awards for NSF INCLUDES (Inclusion across the Nation of Communities of Learners of Underrepresented Discoverers in Engineering and Science), which aims to improve access to STEM education.

2018 – July 12

NSF's IceCube Neutrino Observatory in Antarctica, verified by ground- and space-based telescopes, produces the first evidence of a source of high-energy cosmic neutrinos, answering a century-old astrophysics question with new multi-messenger tools.

2018 – September

NSF announces new measures to protect the research community from harassment, publishing new terms and conditions for awardees. NSF leads the way in addressing this problem in order to promote safe, productive research and education environments for current and future scientists and engineers.

2019

The White House issues "Executive Order on Maintaining American Leadership in Artificial Intelligence." Funding AI research since the early 1960s, NSF is a central player, with earlier breakthroughs in disaster predictions, medical procedures and more.

2019 – April 10

The Event Horizon Telescope project and NSF hold a press conference to reveal the first image of a black hole. EHT scientists captured the image of the black hole, outlined by hot gas emissions swirling near its event horizon.

2020 – June 18

Sethuraman Panchanathan is confirmed as NSF director.

2022 – March 16

NSF establishes the Directorate for Technology, Innovation and Partnerships — its first new directorate in 30 years — to bridge curiosity-driven and use-inspired research and grow industry, create new jobs, and cultivate a highly skilled STEM workforce across the nation.

2022 – May 12

NSF, with the Event Horizon Telescope Collaboration, holds a press conference to unveil the first image of Sagittarius A*, the supermassive black hole at the center of the Milky Way galaxy.

Additional resources

Science: The Endless Frontier (75th Anniversary Edition)

View a PDF of the file or purchase a printed version.

National Science Foundation Act of 1950

This legislation, Public Law 81-507, signed by Truman on May 10, 1950, established NSF.

Address to the Centennial Anniversary AAAS

Truman's September 1948 speech to the Centennial AAAS Annual Meeting in Washington, D.C. This speech is credited with giving rise to NSF's creation.

The National Science Foundation: A Brief History

A 1994 report by George T. Mazuzan, former NSF historian.

NSF's history wall guide

This beautiful mural provides a visual history of NSF, spanning seven decades of scientific discovery and innovation and depicting the agency's impact on the nation.